In software engineering, don't repeat yourself (DRY) is a principle aimed at reducing repetition of code. The installed packages and apps we use in our Python/Django projects are great examples of the DRY concept.

In this article we will learn:

- How to create custom middleware in a Django project.

- How to create a reusable Django app / Python package to block IPs.

- How to upload the Python package to PyPI and djangopackages.org.

The code of the Django app developed in this article is hosted on GitHub. The package is available on PyPI and djangopackages.org.

Creating custom middleware:

If you are using a Django version older than 1.10, the process will be a little different. We have used Django 2.0 for this example.

First, create a project. We strongly recommend using a virtual environment with Django 2.0 for this project.

Create an app named django_bot_crawler_blocker and add it to the list of installed apps in the settings.py file.

Complete code is available on Github.

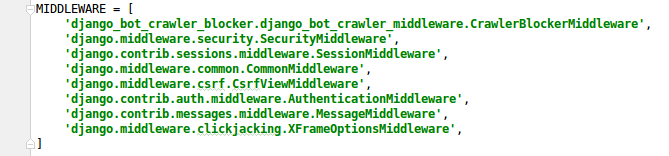

Middleware classes are listed in your Django project's settings.py file.

    MIDDLEWARE = [
        'django.middleware.security.SecurityMiddleware',
        'django.contrib.sessions.middleware.SessionMiddleware',
        'django.middleware.common.CommonMiddleware',
        'django.middleware.csrf.CsrfViewMiddleware',
        'django.contrib.auth.middleware.AuthenticationMiddleware',
        'django.contrib.messages.middleware.MessageMiddleware',
        'django.middleware.clickjacking.XFrameOptionsMiddleware',
    ]

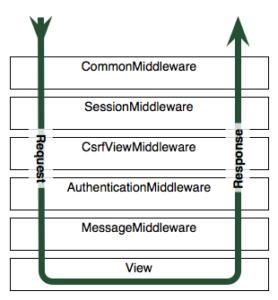

The code in these classes is executed for each request and response. The ordering of these layers matters a lot: a request propagates from the top middleware to the bottom one, and the response then travels back from the bottom middleware to the top.
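The ordering described above can be illustrated without Django at all. The following is a minimal sketch in plain Python; the helper names (`make_middleware`, `build_chain`, `view`) and the string request are invented for illustration and are not part of Django's API. It wraps a view in two layers and records the order in which each layer runs.

```python
# Pure-Python simulation of the layered middleware model (no Django needed).
events = []


def make_middleware(name):
    """Return a middleware factory that records when its layer runs."""
    def factory(get_response):
        def middleware(request):
            events.append(name + ": before view")  # request phase
            response = get_response(request)
            events.append(name + ": after view")   # response phase
            return response
        return middleware
    return factory


def view(request):
    """Stand-in for a Django view."""
    events.append("view")
    return "response for " + request


def build_chain(factories, view_func):
    """Wrap the view so that the first listed factory ends up outermost."""
    handler = view_func
    for factory in reversed(factories):
        handler = factory(handler)
    return handler


# Listed top to bottom, as they would appear in MIDDLEWARE.
handler = build_chain([make_middleware("top"), make_middleware("bottom")], view)
result = handler("/home")
print("\n".join(events))
```

The recorded events show the request passing through "top" and then "bottom" before reaching the view, and the response passing back through "bottom" and then "top".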

To create your own middleware, create a file django_bot_crawler_middleware.py in your app.

Define a new class in this file.

    class CrawlerBlockerMiddleware(object):

        def __init__(self, get_response=None):
            self.get_response = get_response
            # One-time configuration and initialization.

In Django <=1.9 __init__() didn’t accept any arguments. To allow our middleware to be used in Django 1.9 and earlier, we will make get_response an optional argument.
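To make the compatibility idea concrete, here is a minimal sketch of one class that serves both styles. It uses plain-Python stand-ins: the request is a dict, the `blocked` key is a hypothetical placeholder for the real check, and the class name is invented for illustration. Old-style middleware (Django 1.9 and earlier) is instantiated without arguments and has its process_request() hook called, while new-style middleware receives get_response and is called directly. Django itself ships django.utils.deprecation.MiddlewareMixin for the same purpose.

```python
class CompatBlockerMiddleware(object):
    """Illustrative class usable as both old-style and new-style middleware."""

    def __init__(self, get_response=None):
        # Old-style middleware is created with no arguments, hence the default.
        self.get_response = get_response

    def process_request(self, request):
        # Old-style hook: return None to continue, or a response to stop here.
        # "blocked" is a hypothetical flag standing in for the real IP check.
        if request.get("blocked"):
            return "403 Forbidden"
        return None

    def __call__(self, request):
        # New-style entry point: reuse the old-style hook, then call onward.
        response = self.process_request(request)
        if response is None:
            response = self.get_response(request)
        return response


# New-style usage: the next layer is passed in as get_response.
new_style = CompatBlockerMiddleware(lambda request: "200 OK")
print(new_style({}))
print(new_style({"blocked": True}))

# Old-style usage: no arguments, only the hook is called.
old_style = CompatBlockerMiddleware()
print(old_style.process_request({}))
```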

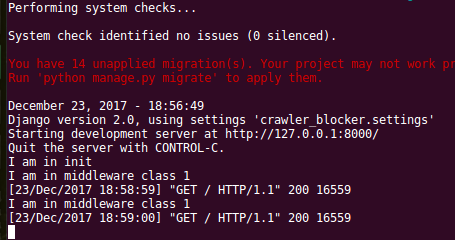

The __init__() method is executed only once, when the server starts. To test this, add a print statement to the method.

Now add the __call__() method to your class.

        def __call__(self, request):
            # Code to be executed for each request before
            # the view (and later middleware) are called.

            response = self.get_response(request)

            # Code to be executed for each request/response after
            # the view is called.

            return response

To test your middleware class, add a print statement as the first line of this method.

    class CrawlerBlockerMiddleware(object):

        def __init__(self, get_response=None):
            print("I am in init")
            self.get_response = get_response
            # One-time configuration and initialization.

        def __call__(self, request):
            print("I am in middleware class 1")
            # Code to be executed for each request before
            # the view (and later middleware) are called.

            response = self.get_response(request)

            # Code to be executed for each request/response after
            # the view is called.

            return response

Add your middleware class to the list of middleware classes in the settings.py file.

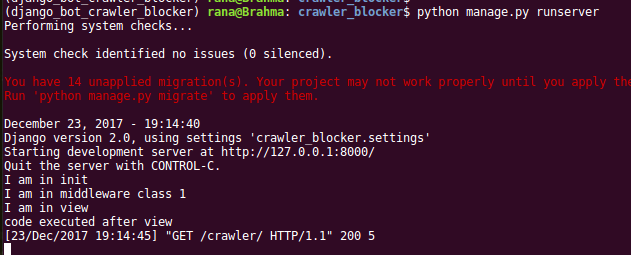

Now start your server and go to localhost:8000. You can see the output of the print statements in the terminal. The __init__ method is called only once, when the server starts, while the __call__ method is called on every request.

Similarly, you can test the order of execution by putting one print statement in the lower part of the method and another in some view function.


Creating IP blocking app:

We have already completed the hard part. Now we know that our middleware will be executed for each request. Let us add code that counts the hits made by a particular IP in a given time window and blocks it if the number of requests exceeds the set limit.

    from django.core.cache import cache
    from django.http import HttpResponseForbidden
    from django.conf import settings


    class CrawlerBlockerMiddleware(object):

        def __init__(self, get_response=None):
            print("I am in init")
            self.get_response = get_response
            # One-time configuration and initialization.

        def __call__(self, request):
            print("I am in middleware class 1")

            # get the client's IP address
            x_forwarded_for = request.META.get('HTTP_X_FORWARDED_FOR')
            ip = x_forwarded_for.split(',')[0] if x_forwarded_for else request.META.get('REMOTE_ADDR')

            # unique key for each IP
            ip_cache_key = "django_bot_crawler_blocker:ip_rate" + ip

            ip_hits_timeout = settings.IP_HITS_TIMEOUT if hasattr(settings, 'IP_HITS_TIMEOUT') else 60
            max_allowed_hits = settings.MAX_ALLOWED_HITS_PER_IP if hasattr(settings, 'MAX_ALLOWED_HITS_PER_IP') else 2000

            # get the hits by this IP in the last IP_HITS_TIMEOUT seconds
            this_ip_hits = cache.get(ip_cache_key)

            if not this_ip_hits:
                this_ip_hits = 1
                cache.set(ip_cache_key, this_ip_hits, ip_hits_timeout)
            else:
                this_ip_hits += 1
                cache.set(ip_cache_key, this_ip_hits)

            # print(this_ip_hits, ip, ip_hits_timeout, max_allowed_hits)

            if this_ip_hits > max_allowed_hits:
                return HttpResponseForbidden()
            else:
                response = self.get_response(request)
                print("code executed after view")
                return response
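The counting logic of the middleware above can be tried out in isolation. The following is a minimal sketch in plain Python, not the package's actual implementation: `get_client_ip`, `FixedWindowCounter` and `is_blocked` are hypothetical names, an in-memory dict stands in for Django's cache, and the clock is injectable so the time window can be exercised without waiting.

```python
import time


def get_client_ip(meta):
    """Pick the client IP from a request.META-like dict."""
    forwarded = meta.get("HTTP_X_FORWARDED_FOR")
    if forwarded:
        # The header may hold "client, proxy1, proxy2"; take the first entry.
        return forwarded.split(",")[0].strip()
    return meta.get("REMOTE_ADDR")


class FixedWindowCounter:
    """Count hits per key; a count expires `window` seconds after its first hit."""

    def __init__(self, window, clock=time.monotonic):
        self.window = window
        self.clock = clock
        self._entries = {}  # key -> (hits, expires_at)

    def hit(self, key):
        now = self.clock()
        hits, expires_at = self._entries.get(key, (0, 0.0))
        if hits == 0 or now >= expires_at:
            # No live entry: start a new window from this hit.
            hits, expires_at = 0, now + self.window
        hits += 1
        self._entries[key] = (hits, expires_at)
        return hits


def is_blocked(counter, meta, max_allowed_hits):
    """Return True once an IP exceeds max_allowed_hits within the window."""
    ip = get_client_ip(meta) or "unknown"
    key = "django_bot_crawler_blocker:ip_rate" + ip
    return counter.hit(key) > max_allowed_hits


# Usage with a fake clock: two hits are allowed, the third is blocked.
fake_now = [0.0]
counter = FixedWindowCounter(window=30, clock=lambda: fake_now[0])
meta = {"REMOTE_ADDR": "127.0.0.1"}
print([is_blocked(counter, meta, 2) for _ in range(3)])
```

Two points are worth keeping in mind. The X-Forwarded-For header is supplied by the client or by proxies, so it can be spoofed unless a trusted proxy sets it. Also, this sketch keeps the expiry fixed at the first hit, whereas the middleware above calls cache.set without a timeout on later hits, which makes the entry fall back to the cache's default timeout.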

Don't forget to define the two variables below in your settings.py file.

    MAX_ALLOWED_HITS_PER_IP = 2000  # max allowed hits per IP_HITS_TIMEOUT time from an IP
    IP_HITS_TIMEOUT = 60  # timeout in seconds for IP in cache

We are also storing data in the cache, so if you haven't defined cache settings in your project, add the lines below to your settings.py file.

    CACHES = {
        'default': {
            'BACKEND': 'django.core.cache.backends.db.DatabaseCache',
            'LOCATION': 'cache_table',
        }
    }

Here we are using a database table as the cache backend because Memcached and other backends are not supported on the PythonAnywhere server.

Run the command: python manage.py createcachetable.

We placed our middleware first in the middleware list. That means that before executing anything else, our code checks the IP and blocks it if the number of requests made by that IP exceeds the threshold.
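As a sketch, the resulting settings.py fragment could look like the following. The dotted path of the first entry is an assumption derived from the app name, file name and class name used in this article; adjust it if your layout differs.

```python
# settings.py (fragment)
MIDDLEWARE = [
    # Placed first so the IP check runs before any other middleware.
    # The dotted path is assumed from the app, file and class names above.
    'django_bot_crawler_blocker.django_bot_crawler_middleware.CrawlerBlockerMiddleware',
    'django.middleware.security.SecurityMiddleware',
    'django.contrib.sessions.middleware.SessionMiddleware',
    'django.middleware.common.CommonMiddleware',
    'django.middleware.csrf.CsrfViewMiddleware',
    'django.contrib.auth.middleware.AuthenticationMiddleware',
    'django.contrib.messages.middleware.MessageMiddleware',
    'django.middleware.clickjacking.XFrameOptionsMiddleware',
]
```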

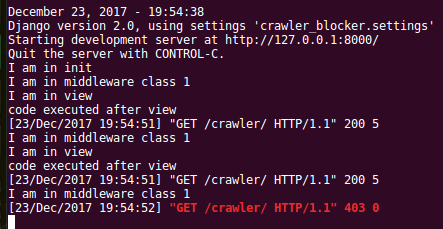

To test on a local system, set these values very low, e.g. IP_HITS_TIMEOUT = 30 and MAX_ALLOWED_HITS_PER_IP = 2.

If the variables are not defined in the settings file, the default values will be used.
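The fallback to default values can also be written with getattr(), which takes a default as its third argument. This is a small sketch of that idiom, not the package's code: `read_limits` is a hypothetical helper, and types.SimpleNamespace stands in for Django's settings object so the snippet runs on its own.

```python
from types import SimpleNamespace


def read_limits(settings):
    """Read both limits, falling back to the article's defaults when unset."""
    ip_hits_timeout = getattr(settings, "IP_HITS_TIMEOUT", 60)
    max_allowed_hits = getattr(settings, "MAX_ALLOWED_HITS_PER_IP", 2000)
    return ip_hits_timeout, max_allowed_hits


# Nothing defined: the defaults are returned.
defaults = read_limits(SimpleNamespace())

# Low values for local testing, as suggested above.
local = read_limits(SimpleNamespace(IP_HITS_TIMEOUT=30, MAX_ALLOWED_HITS_PER_IP=2))

print(defaults, local)
```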

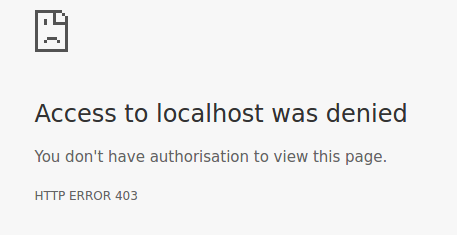

Restart the server and send requests in quick succession. After two requests you will start receiving a 403 error.

403 returned after two requests.

Now our app is ready and working.

Converting your app to a Python package:

Once it is tested thoroughly, we can convert our app into a Python package and upload it to PyPI and djangopackages.org. Follow the steps below.

- Create a new directory with the name you want to give to your package. Make sure package name is unique and is not being used already.

- Move your app into this newly created directory. Make sure you remove print statements and unnecessary files.

- Create a README.rst and a setup.py file.

- Create a LICENSE file. You may take help from a license chooser [2].

- Create a MANIFEST.in file.

- Install setuptools if it is not installed already.

- Run the command python setup.py sdist. This creates a directory called dist and builds your new package, package-name-1.0.tar.gz.

    (django_bot_crawler_blocker) rana@Brahma: django-bot-crawler-blocker$ python setup.py sdist
    running sdist
    running egg_info
    writing django_bot_crawler_blocker.egg-info/PKG-INFO
    writing top-level names to django_bot_crawler_blocker.egg-info/top_level.txt
    writing dependency_links to django_bot_crawler_blocker.egg-info/dependency_links.txt
    reading manifest file 'django_bot_crawler_blocker.egg-info/SOURCES.txt'
    reading manifest template 'MANIFEST.in'
    writing manifest file 'django_bot_crawler_blocker.egg-info/SOURCES.txt'
    running check
    creating django-bot-crawler-blocker-1.0
    creating django-bot-crawler-blocker-1.0/django_bot_crawler_blocker
    creating django-bot-crawler-blocker-1.0/django_bot_crawler_blocker.egg-info
    copying files to django-bot-crawler-blocker-1.0...
    copying LICENSE -> django-bot-crawler-blocker-1.0
    copying MANIFEST.in -> django-bot-crawler-blocker-1.0
    copying README.rst -> django-bot-crawler-blocker-1.0
    copying setup.py -> django-bot-crawler-blocker-1.0
    copying django_bot_crawler_blocker/__init__.py -> django-bot-crawler-blocker-1.0/django_bot_crawler_blocker
    copying django_bot_crawler_blocker/apps.py -> django-bot-crawler-blocker-1.0/django_bot_crawler_blocker
    copying django_bot_crawler_blocker/django_bot_crawler_middleware.py -> django-bot-crawler-blocker-1.0/django_bot_crawler_blocker
    copying django_bot_crawler_blocker.egg-info/PKG-INFO -> django-bot-crawler-blocker-1.0/django_bot_crawler_blocker.egg-info
    copying django_bot_crawler_blocker.egg-info/SOURCES.txt -> django-bot-crawler-blocker-1.0/django_bot_crawler_blocker.egg-info
    copying django_bot_crawler_blocker.egg-info/dependency_links.txt -> django-bot-crawler-blocker-1.0/django_bot_crawler_blocker.egg-info
    copying django_bot_crawler_blocker.egg-info/top_level.txt -> django-bot-crawler-blocker-1.0/django_bot_crawler_blocker.egg-info
    Writing django-bot-crawler-blocker-1.0/setup.cfg
    Creating tar archive
    removing 'django-bot-crawler-blocker-1.0' (and everything under it)

Uploading your app to PyPI, the Python Package Index:

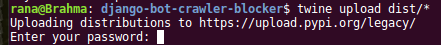

To upload your package to PyPI, first create an account on PyPI. We will upload the package using twine. Install twine, if it is not already installed, using the command sudo apt-get install twine.

Create a .pypirc file in your home directory: vi ~/.pypirc.

Paste the content below into it.

    [distutils]
    index-servers=pypi

    [pypi]
    repository = https://upload.pypi.org/legacy/
    username = your-username

Do not store your password in this file. You will be asked for the password when you run the command below in the root directory of your package.

twine upload dist/*

Once the command has executed successfully, your package will be available on pypi.python.org.

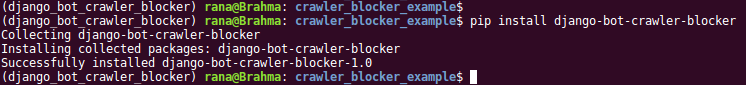

Testing your Python package (Django app) in your Django project:

- Create a new Django project. Use a virtual environment.

- After the virtual environment is created, install the Python package using pip: pip install django-bot-crawler-blocker.

- Follow the steps in the README file to configure the package.

- Run the server and tweak the timeout and maximum-hits values in the settings file.

- Share this article among other developers if this worked for you.

Happy learning.

References:

[1] http://docs.python-guide.org/

[2] https://choosealicense.com/

[3] https://docs.djangoproject.com